Consumers have a right to have informative yet easy-to-use nutrition labelling, and effective labelling is one tool to help control the epidemics of obesity and diabetes. Everyone agrees that on its own, the current Nutrition Information Panel (NIP) used in NZ does not achieve the goal of facilitating healthier food choices. But suggestions around more consumer-friendly front-of-pack labels have been fiercely contested by industry and health stakeholders – until now, it seems. Is the new Health Star Rating label truly a win-win consensus, or might too much have been given away to reach a compromise?

The development of this label occurred outside the usual regulatory channels, using a collaborative design process involving several committees with members from government, industry, academia, health and consumer organisations in Australia.

This came about after Ministers from Australian and New Zealand Governments accepted an expert panel’s recommendation for interpretive front-of-pack nutrition labels (FOPL), but rejected the advice to introduce the Multiple Traffic Light (MTL) label system (1).

Ministers expanded on this decision, saying they had:

“…concluded that there is currently not enough evidence to demonstrate that any of form of front-of-pack labelling, including traffic light labelling … [would help consumers] make informed choices”. (2)

Many were incredulous that the Ministers claimed a lack of evidence as grounds for their decision:

But the president of the Australian Medical Association, Steve Hambleton, said he could not understand how the government could cite “lack of evidence” as a reason not to back traffic lights, given it had been recommended by the inquiry “expressly commissioned to investigate the evidence”.

More incredible still, the Ministers now endorse a system that has not been subject to any comparative research. This is a wholly inadequate situation; there is no robust evidence that the Health Star Rating (HSR) is the most appropriate FOPL system to shift behaviour towards healthier choices, as was first demanded.

Clearly, evidentiary standards changed during the process, and it seems likely the mandate to achieve consensus between factious health and industry groups played a role. This raises questions about how much power various stakeholders were able to exert during a minimally transparent process with no opportunity for public comment.

While four studies were conducted (three in Australia and one in NZ), none of these tells us whether the HSR is a more effective format than the MTL, or other labelling systems. It is also important to note the final format announced in June 2014 is not the same design that was tested throughout 2013. Several industry representatives redesigned the label sometime in 2014, and worryingly, this final design actually fails to align with some of the initial research findings.

Though the final HSR design is apparently preferred by consumers, information about how this conclusion was reached is not provided. But much more importantly, consumer preference has repeatedly been shown to be a very poor predictor of ability to use nutrition information (3-6). Similarly, intentions are poor predictors; 90% of consumers in the US (7-9) and NZ (10) predicted they’d regularly use numeric nutrition tables, and unfortunately we know high usage rates never transpired. Relying on consumers’ estimations or self-reports of future behaviour is far from sufficient grounds for approving the design.

In short, we do not know whether the new label is strong enough to disrupt habitual behaviour patterns and make a meaningful difference to improving dietary choices. By contrast, we know people are triggered to action by red lights (11-13). Choice experiments reveal that MTL labels shift consumers’ preferences away from energy-dense foods and, in particular, help consumers who don’t often use the NIP to make decisions like those who do use it (14-16). MTLs also encourage consumers with low levels of dietary self-control to choose less energy-dense foods (17), and this is an important segment to influence. Since the recommendation to adopt MTLs was rejected, evidence has continued to accumulate showing their utility (16). Bizarrely, several European Union countries are threatening the UK with legal action following the voluntary introduction of MTLs, because they are “clearly influencing consumer choice”.

We would be wise to remember that over ten years ago, our regulators assured us that the NIP would be the catalyst for dietary change (18,19). This format was chosen despite earlier research conducted for the Ministry of Health detailing why much simpler labels were needed (20) – and hindsight offers the gift of illuminating faulty decision making. What will we say in five years’ time, at the end of this new episode in food labelling history?

We lack the rationale to justify ‘waiting and watching’ as a reasonable approach to the urgent health problem of the epidemics of obesity and diabetes. While a short-lived NZ Advisory group ruled the MTL out of contention through its guidelines (“Focus on the whole food, not specific nutrients”) (21), the MTL still fits with the parameters first established by the Ministers. We need rigorous experimental research that compares the final HSR format to other FOPL initiatives, including putting MTLs back among regulatory options, to assess whether the HSR has the potential to induce positive changes in purchasing behaviour.

The Starlight trial being conducted within the HRC-funded DIET programme will provide preliminary evidence on the relative merits of Stars vs Traffic Lights. However, the optional access to labels via a smartphone app has some limitations, and an assortment of research methods will be necessary. For example, discrete choice experiments using a wide array of food categories would provide strong indications about the relative strength of alternative labels. Even better evidence would come from in-store trials using shelf-tags in matched intervention and control stores.

Poor diets will soon overtake tobacco as the leading cause of disease in New Zealand (22), so we simply can’t afford to keep missing opportunities for meaningful action.

Appendix with further detail

A brief history of how we got the Health Star Rating label

In 2009, an expert panel was appointed to review trans-Tasman food labelling law and policy; they examined the international research literature and conducted two rounds of public consultation. The panel’s comprehensive report, “Labelling Logic”, contained 61 recommendations, including several pertaining to nutrition labelling (1). First:

Recommendation 50: That an interpretative front-of-pack labelling system be developed that is reflective of a comprehensive Nutrition Policy and agreed public health priorities.

The NIP is non-interpretive: it simply states the facts, leaving individual consumers to decide what, for example, 12.1 grams of saturated fat per 100 grams means for their health. An interpretive label ‘digests’ this information by combining it with nutritional science, and communicates the meaning via easily recognised visual heuristics.

The panel went further, and concluded that there was good evidence to recommend adopting the Multiple Traffic Light (MTL) label:

Recommendation 51: That a multiple traffic lights front-of-pack labelling system be introduced. Such a system to be voluntary in the first instance, except where general or high level health claims are made or equivalent endorsements/trade names/marks appear on the label, in which case it should be mandatory.

While the Ministers agreed with Recommendation 50, they declined to adopt MTLs:

The implementation and monitoring of any FoPL system cannot commence until the type of system is agreed. While recommendations 51–55 pre-suppose that a MTL FoPL system will be implemented, this is pre-emptive of the outcome of recommendation 50. The MTL system is only one approach to interpretive FoPL, and all other approaches need to be considered before recommendations 51–55 can be considered (23).

After this, the countries parted ways and each set about establishing government-led working groups to investigate possible interpretive front-of-pack nutrition labels. The NZ group reported a set of General and Design principles to former Minister of Food Safety, Kate Wilkinson, in November 2012 (21). However, the NZ Government then chose to wait and watch Australian developments, eventually opting to adopt their system.

What research has been done on the Health Star Rating?

Current Food Safety Minister Nikki Kaye issued a media release saying “The system has been robustly tested … The Health Star Rating system is backed by research internationally and in New Zealand.” This could be considered misleading: the HSR label is new, and has never been tested outside Australia or NZ. Four studies were completed during development (3 in Australia, 1 in NZ), but none tested the actual format announced in June 2014. Worryingly, the final format even ignores findings from those studies about which design elements consumers found most useful. The studies are briefly summarised here, and I can be contacted for further details on the limitations of these studies.

The first Australian study used qualitative methods to investigate consumers’ current information-search behaviours. It also sought participants’ reactions to alternative star-rating label design ideas, from which a set of design guidelines was generated. The second used an online survey to measure consumers’ self-rated ability to understand different elements based on these guidelines. The results of this study were used to generate a Health Star Rating tested in the third experiment:

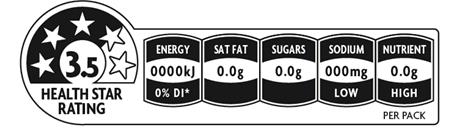

Figure 1 – The original HSR label design tested in 2013

That final Australian study involved a large simulated shopping experiment, which was conducted to measure whether adding the HSR label (or a reduced version showing only the Stars and Energy tab) caused respondents to change their purchase behaviour. It found people said they’d switch to buying more highly rated products, and fewer lowly rated products, with the threshold being around 2.5-3.0 stars. However, as I explain in the detailed section at the end, the experimental design had flaws that raise questions about the reliability of these results.

One study was conducted in NZ, to test whether consumers could use three versions of the health star label to identify healthier options within two pairs of substitutable foods. Overall, people were about 50% more likely to identify the more highly rated choice with a stars label, compared with when only the back-of-pack label was present.

According to Professor Winsome Parnell, the Australian FOPL group “settled on the star rating system quite early in its deliberations” (24). The research methods used certainly appear designed to find support for this system, particularly by failing to compare it to other interpretive formats.

Is there anything else like the Health Star Rating we can compare to?

There is only one summary star-rating label in use: the Guiding Stars shelf-tag label, introduced in 2007 and used by about 2,000 stores in the US and Canada. The Guiding Stars algorithm is somewhat similar to the HSR in that foods get points from positive nutrients and lose them for negative nutrients per 100g. Foods are rated from zero to three stars, and the rating appears next to the price on the shelf-tag.

The Guiding Stars system has reportedly shifted sales towards items with more stars and away from lower-rated foods (25,26), and a simulation study suggests this is having positive effects on overall nutrient-intake obtained from breakfast cereals. However, a large number of products don’t qualify for any stars, and two years after launch, these made up three-quarters of all items purchased (26). Therefore, it is still unclear whether the system is improving shoppers’ total diets.

Why might the HSR not influence purchasing patterns?

Grocery shopping is a highly routinised behaviour that people tend to complete quickly (27-29), and shoppers habitually purchase from product portfolios that usually contain two to five favoured brands in each category (30). An effective label has to be strong enough to disrupt our normal ‘autopilot’ mode by standing out from the other persuasive labelling elements, to make us stop and consider whether there is a better choice.

Here is a brief summary of some of my concerns about whether the HSR has the potential to shift shoppers’ behaviour:

1. It may not produce enough variation in scores: According to Dr Mike Rayner, who led development of the rules underpinning the rating calculator (31), scores awarded across all foods roughly follow a normal distribution. That means about two-thirds of all foods will have scores that centre on the middle of the scale (perhaps a range of 2.0–3.5), and few will have very high or low ratings (in an initial pilot of the scheme, only 3/260 products rated 5 stars).

It might then seem that the bulk of foods are roughly interchangeable, and rated at a level that doesn’t prompt much switching according to the simulation study. However, these foods could still have meaningful differences in some nutrients – that’s because foods earn and lose points across several criteria. On balance these foods have roughly equal amounts of ‘good’ and ‘bad’ things going for them, and consumers will have to use extra information to make further comparisons if they care about where their calories come from. Will shoppers be able to use these labels for that purpose? See point 4 below.

It’s also worth noting that the star rating tends to produce a slightly more favourable view of foods’ healthiness than the traffic light system (32).
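As a rough illustration of the normal-distribution argument in this point, the Python sketch below computes the share of foods that would fall in the middle band. The mean (2.75 stars) and standard deviation (0.75 stars) are assumptions, chosen only so that the 2.0–3.5 band sits one standard deviation either side of the mean; they are not figures from the HSR calculator. The pilot share uses the 3/260 figure quoted above.

```python
from statistics import NormalDist

# Assumed parameters (hypothetical, for illustration only): picked so that
# the 2.0-3.5 band mentioned in the text is the mean +/- one standard deviation.
ASSUMED_MEAN = 2.75
ASSUMED_SD = 0.75

# Figure quoted in the text: 3 of 260 pilot products rated 5 stars.
PILOT_FIVE_STAR_SHARE = 3 / 260


def share_between(low: float, high: float,
                  mean: float = ASSUMED_MEAN, sd: float = ASSUMED_SD) -> float:
    """Return the fraction of a normal distribution lying between low and high."""
    dist = NormalDist(mu=mean, sigma=sd)
    return dist.cdf(high) - dist.cdf(low)


if __name__ == "__main__":
    print(f"Share of foods rated 2.0-3.5 stars: {share_between(2.0, 3.5):.1%}")
    print(f"Share of pilot products rated 5 stars: {PILOT_FIVE_STAR_SHARE:.1%}")
```

Under these assumptions roughly 68% of foods land in the middle band, which is where the "about two-thirds" figure comes from. A different mean or spread would change the band, but not the general point that most foods cluster mid-scale.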

2. The most prominent part of the heuristic is static: Every label always has five visible stars of the same colour, irrespective of the food’s rating. The rating is communicated by changing the amount of background shading, and the number printed in the middle. Disconcertingly, the stars actually appear smaller as the rating increases, as their border is absorbed into the background. This clearly fails good design principles of simplicity and perceptibility. The number of stars awarded should overtly change across ratings (i.e. a two-star food displays only two stars), so the visual impact is obvious; this is how the Guiding Stars icon works.

This is one of the big changes from the designs tested. Originally, the stars switched from ‘hollow’ to ‘solid’ as the ratings increased, and the background remained white. Furthermore, the rating number shifted along the slider bar, yielding a second visual indication of the rating. This feature was deemed important in the early research, so it is unclear why this finding has been ignored.

3. Because it’s voluntary, consumers won’t be able to compare all options: The availability of HSR labels will be patchy, probably especially so among energy-dense foods. As lawyers working for the industry have commented:

“They [food producers] now face three choices – publish the star rating on packaging based on current product formulations, reformulate their products to improve their star rating, or ignore the star rating system.”

While some manufacturers have adopted the industry’s own Daily Intake Guide (DIG) label, others haven’t – presumably, many of these companies will remain in the ‘ignore’ camp.

This means consumers are going to face a choice array that contains no front-of-pack labels, the DIG (which my research shows is no better than the NIP) (14,15,33), and different versions of the HSR (see next point). This will make comparisons very challenging, and sharply reduces the likelihood that different choices will be considered.

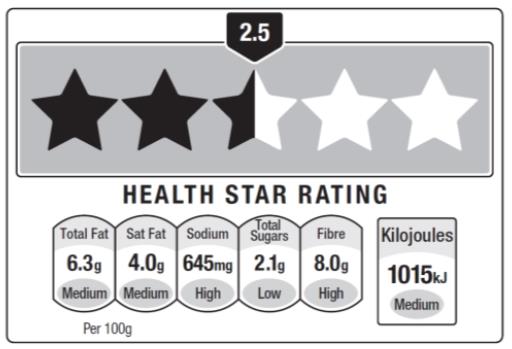

4. Even when used, the HSR design is not consistent: Five versions of the HSR label have been sanctioned — including one with no stars!

- HSR + (dietary) energy icon + 3 prescribed nutrient icons + 1 optional nutrient icon.

- HSR + energy icon + 3 prescribed nutrient icons.

- HSR + energy icon.

- HSR (e.g. when pack size does not accommodate more complete versions).

- Energy icon (e.g. for small pack sizes such as for some confectionery products).

For the nutrient icon tabs, manufacturers can present the information per 100mL/g or per pack. This is at least an improvement on the DIG, which presented highly variable per-serving information, but might still allow for confusing comparisons.

Returning to the research described earlier, the tested designs actually incorporated the fundamental feature of the MTL in the nutrient tabs – the levels were rated as low, medium or high (using those adjectives, not colours). Again, we see the final design doesn’t comply with testing recommendations, and this will almost certainly reduce the label’s efficacy.

Now the word ‘high’ will only appear for the positive nutrient, and ‘low’ against saturated fat, sugars and sodium – thus, the industry can now build nutrition-content claims right into the label. Using the descriptors ‘medium’ and ‘high’ for negative nutrients was obviously still unacceptable to industry, despite (or due to?) clear evidence that consumers want to minimise purchase of foods with ‘red-light’ ratings (11,12).

Finally, a comment on the cost-to-implement estimates

The industry has claimed that it will cost up to $200 million to implement the HSR. By comparison, implementing the Daily Intake Guide has cost $72 million (others say only $40 million), while the consultants PWC estimate the five-year cost of the HSR at $40.5 million for industry (34). A confidential Member Briefing document (dated 22/01/14) from the Australian Food and Grocery Council provides insight into what the $200 million sum includes:

The costs (direct and indirect) of implementing the labelling proposals should be carefully considered and estimates provided to the consultants. Along with the direct costs of changes to packaging, companies should consider ongoing and indirect costs such as the value of label space that will have to be reserved to carry the star-rating panel; ongoing monitoring and assurance costs; implications for exports or import competition; and consistency with global practice.

Thus, it includes the opportunity costs of not being able to use that space for marketing, as well as costs that manufacturers must already factor in to their operations regardless of this label.

The industry says that FOPLs will hit small companies the hardest. However, one such company went public when they made the decision to introduce the star label early. The small Monster Health Food Co, which makes seven types of muesli products, told reporters their direct costs were less than $600 per product, much less than the $5,000–15,000 per item claimed by the Australian Food and Grocery Council (35).
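The gap between these figures can be made concrete with a little arithmetic. The Python sketch below uses only the numbers quoted above. Treating $600 as an upper bound and applying the claimed per-item range uniformly to all seven products are simplifying assumptions, and the variable names are illustrative.

```python
# Figures quoted in the text; names are illustrative.
PRODUCTS = 7                     # muesli products made by the company
REPORTED_MAX_PER_PRODUCT = 600   # "less than $600 per product" (upper bound)
CLAIMED_LOW_PER_ITEM = 5_000     # lower end of the claimed per-item cost
CLAIMED_HIGH_PER_ITEM = 15_000   # upper end of the claimed per-item cost


def total_cost(per_item: int, items: int = PRODUCTS) -> int:
    """Total relabelling cost if every item costs the same amount."""
    return per_item * items


reported_total = total_cost(REPORTED_MAX_PER_PRODUCT)
claimed_low_total = total_cost(CLAIMED_LOW_PER_ITEM)
claimed_high_total = total_cost(CLAIMED_HIGH_PER_ITEM)

# How many times larger the claimed cost is than the reported upper bound.
ratio_low = CLAIMED_LOW_PER_ITEM / REPORTED_MAX_PER_PRODUCT
ratio_high = CLAIMED_HIGH_PER_ITEM / REPORTED_MAX_PER_PRODUCT

if __name__ == "__main__":
    print(f"Reported total (upper bound): ${reported_total:,}")
    print(f"Claimed total: ${claimed_low_total:,} to ${claimed_high_total:,}")
    print(f"Claimed cost is {ratio_low:.1f}x to {ratio_high:.1f}x the reported figure")
```

On these figures, relabelling the company's whole range cost under $4,200, against $35,000 to $105,000 at the claimed rates. Because the reported cost is an upper bound, the true multiple is at least about 8.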

Cost is used as a reason not to make FOPLs compulsory, but this does not appear to stack up as a reason for keeping labels voluntary.

Author: Dr Ninya Maubach